The best test data tool: build or buy?

How to choose the right test data management tool?

You’re thinking about Test Data Management and you’ve searched the internet. Today, multiple vendors offer a ‘test data management’ solution, and what they mean by that ranges from data virtualisation to subsetting, masking and generation. All you want is better test data availability and a way of working with test data that suits the current state of software development (DevOps, Agile, etc.). So should you buy a test data tool or build one yourself?

Buy or build a test data management tool?

In all probability you came across several proprietary software vendors: some are really expensive (Delphix and IBM), some have been acquired by others (CA, acquired by Broadcom) and some have ceased to exist (Imperva Camouflage). This makes it even harder to choose. Maybe you’re thinking about building an application of your own or using an open source test data management tool. This is always an interesting topic, and in this blog we want to share some things to consider when deciding whether to buy a test data tool or build one.

1. Complexity of databases

If you want to decide between buying and building a solution, the complexity of your database is an important factor. Simply put: the higher the complexity of your database, the higher the need for a proprietary tool. Why?

Building and maintaining a solution for a simple database (<100 tables) is doable. But as the database and its data model become more complex, so does the solution you have to build. The complexity of a database can be measured by, for example, the number of tables, the number of columns and the presence of foreign keys.
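To get a first impression of where your own database stands, you can simply count these things. The sketch below does that for an SQLite database using Python's standard `sqlite3` module. The function name `schema_complexity` and the two example tables are our own illustration; for Oracle, SQL Server or another technology you would query that vendor's catalog views instead.

```python
import sqlite3

def schema_complexity(conn):
    """Count tables, columns and foreign keys in an SQLite database."""
    tables = [row[0] for row in conn.execute(
        "SELECT name FROM sqlite_master "
        "WHERE type = 'table' AND name NOT LIKE 'sqlite_%'")]
    columns = 0
    foreign_keys = 0
    for table in tables:
        # PRAGMA statements cannot take bound parameters, so quote the identifier.
        quoted = '"' + table.replace('"', '""') + '"'
        columns += len(conn.execute(f"PRAGMA table_info({quoted})").fetchall())
        foreign_keys += len(conn.execute(f"PRAGMA foreign_key_list({quoted})").fetchall())
    return {"tables": len(tables), "columns": columns, "foreign_keys": foreign_keys}

# Hypothetical two-table schema, only to show the idea.
conn = sqlite3.connect(":memory:")
conn.executescript("""
    CREATE TABLE customer (id INTEGER PRIMARY KEY, name TEXT, email TEXT);
    CREATE TABLE orders (
        id INTEGER PRIMARY KEY,
        customer_id INTEGER REFERENCES customer(id),
        total REAL
    );
""")
metrics = schema_complexity(conn)
print(metrics)  # {'tables': 2, 'columns': 6, 'foreign_keys': 1}
```

These counts are only a rough indicator: a database with few declared foreign keys may still have many implicit relations that a masking or subsetting solution has to know about.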

Building a solution yourself is one part of the story, maintaining it is another. Who is going to maintain the masking or subsetting? What happens if privacy authorities find your masking insufficient? Is it easy to add or change masking rules? What happens if the person who built the solution leaves and takes all the knowledge with them?

2. Number of features

Imagine that you have a small database. Then it is probably feasible to build a masking application yourself. But can you also create a data virtualization or subsetting application? Maybe you can, but is this still cheaper than buying an off-the-shelf product, taking into account that building something yourself might cost 2 to 3 full-time positions?

We believe you should really start considering buying a ready-made tool if you’ve got multiple requirements to meet.

3. Number of database technologies

The number of technologies is also a factor. If you only have one database type (e.g. Oracle or SQL Server), then you could consider building an application for it yourself. If you’ve got multiple database technologies, it gets more difficult straight away.

The time it takes to develop an application for masking and subsetting test data or for creating virtual test data will increase significantly if you have more than one database technology. In that case we really wouldn’t advise you to start building it yourself, especially compared to our pricing.

4. Development rate

If your software development rate is high, you’ll probably create a lot of features. But new features can cause multiple changes in your database, and changes in your database have an impact on your test data management: new data, new tables, new columns. This is great, but it also puts pressure on the maintenance of your (possibly) self-developed application. This can cause a lot of trouble and will take up a lot of time for your (one-person) team (if that person is still working for you).
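One concrete maintenance task this creates is checking, after every release, whether new columns have appeared that your masking does not cover yet. A minimal sketch of such a check is shown below. The function `find_uncovered`, the example schema and the rule names are all hypothetical; in practice the schema would be read from the database catalog and the rules from wherever your masking is configured.

```python
def find_uncovered(schema, masking_rules, reviewed_safe):
    """Return (table, column) pairs that no rule masks and nobody has reviewed."""
    uncovered = []
    for table, columns in schema.items():
        for column in columns:
            key = (table, column)
            if key not in masking_rules and key not in reviewed_safe:
                uncovered.append(key)
    return sorted(uncovered)

# Hypothetical schema after a release that added customer.date_of_birth.
schema = {
    "customer": ["id", "name", "email", "date_of_birth"],
    "orders": ["id", "customer_id", "total"],
}
# Columns that already have a masking rule (rule names are illustrative).
masking_rules = {
    ("customer", "name"): "lookup",
    ("customer", "email"): "scramble",
}
# Columns someone has reviewed and decided do not need masking.
reviewed_safe = {
    ("customer", "id"),
    ("orders", "id"),
    ("orders", "customer_id"),
    ("orders", "total"),
}

uncovered = find_uncovered(schema, masking_rules, reviewed_safe)
print(uncovered)  # [('customer', 'date_of_birth')]
```

Running a check like this in your build pipeline makes schema drift visible, but someone still has to decide how each new column should be masked and keep that decision up to date.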

5. New versions of DB

Then there are Microsoft, Oracle, IBM and whichever other database vendors you might use. These organisations won’t stop developing their database technologies, and we all want Oracle and the others to keep releasing new versions! So new versions of your databases will be released. But does this have any impact on your self-developed test data solution? What will happen, and who is responsible? This may be challenging and thus expensive.

Conclusion

The main thing to keep in mind is how much effort it costs to build a test data tool versus buying one. Building a product can be costly in terms of time and money. You’ll probably need multiple developers for the development phase, so what are the costs? Multiple salaries? And how does that compare to buying a product? This is what you should consider. The conclusion we’ve drawn in multiple cases is: with the purchase of our tools, the investment pays for itself, on average, in one year.

Download paper

“7 tips for choosing the right TDM tool”

The fact that you are looking for a TDM solution is great in itself. High-quality test data management can help you save a lot of time and money. However, it can be difficult to choose the right tool.

Here is a comparison paper with 7 tips for choosing the right test data tool to help you make the right decision.

About DATPROF

DATPROF is a software vendor that develops and distributes its own test data tool set. One of the problems we solve is that, under privacy regulations in Europe, it is no longer allowed to copy production databases for test purposes. Another challenge is the size (often terabytes) of a copy of a production database and the time it takes to make one: test and acceptance environments are often too large and outdated. The third part of a test data tool is test data automation: the availability and accessibility of test data increase massively when test data is delivered to downstream environments automatically.

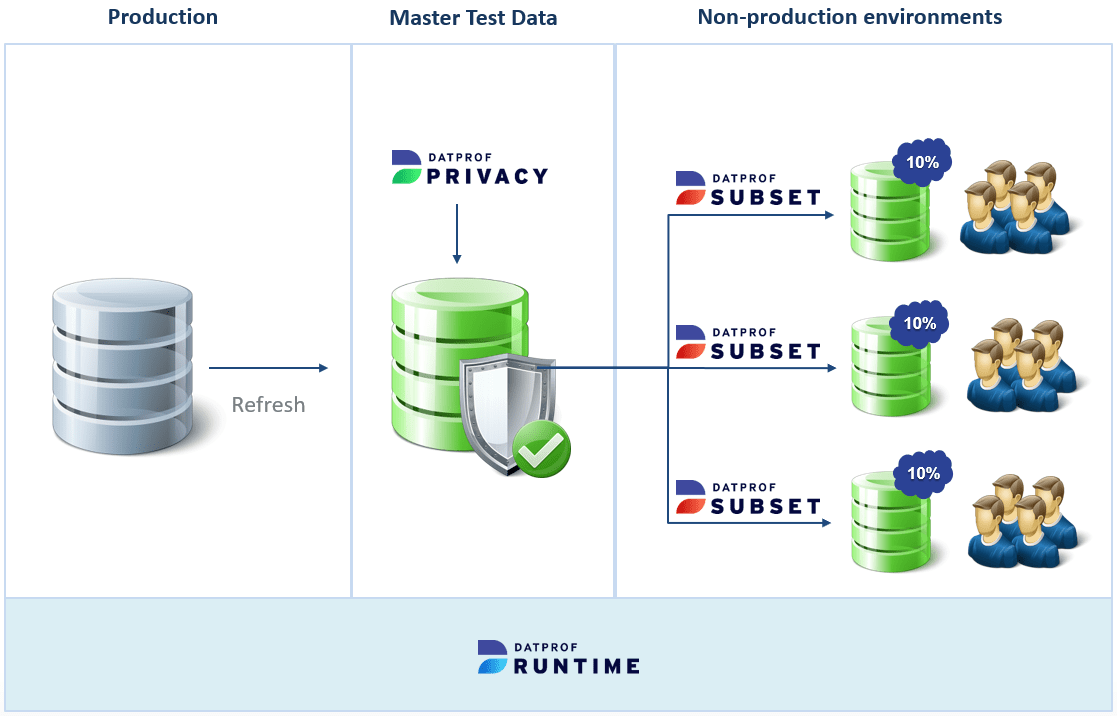

Our TDM solution has 3 major components, with DATPROF Runtime at the heart of the solution. Its central dashboard controls all the test data sources and integrations. This environment is filled with anonymization, generation, subsetting and integration templates. Below you see a typical example of how these components work together: the production refresh is masked in a secure environment and then distributed (as a subset) to the non-production environments.

DATPROF Runtime is where scrum team members have access to the test data self-service portal and where privacy officers have access to the GDPR-proof audit reports. If you are a test automation expert, you have access to the integration apps and can build your own automation with the DATPROF API. In short: administrators have all the tools for easy maintenance available, and management has full control over the entire landscape.

The template-based solution DATPROF Privacy helps you to be compliant by anonymizing and sanitizing copies of production databases, making them compliant with the European privacy law (GDPR) as well as US healthcare rules (HIPAA). You have access to a variety of masking options such as shuffling, scrambling and value lookups. With the custom expression library, you can apply just about any masking technique imaginable.
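If these three masking options are new to you, the generic idea behind each of them can be sketched in a few lines of Python. This is not how DATPROF Privacy implements them; the function names, the replacement list `FAKE_NAMES` and the example rows are our own assumptions, and a real masking run also has to deal with constraints, keys and consistency across tables.

```python
import random
import string

def shuffle_column(values, rng):
    """Shuffling: keep the real values but reassign them to different rows."""
    shuffled = list(values)
    rng.shuffle(shuffled)
    return shuffled

def scramble(value, rng):
    """Scrambling: replace each letter or digit with a random one of the same kind."""
    out = []
    for ch in value:
        if ch.isdigit():
            out.append(rng.choice(string.digits))
        elif ch.isalpha():
            pool = string.ascii_uppercase if ch.isupper() else string.ascii_lowercase
            out.append(rng.choice(pool))
        else:
            out.append(ch)  # keep separators such as '-' so the format survives
    return "".join(out)

def lookup(key, replacements):
    """Value lookup: deterministically pick a replacement value from a list."""
    return replacements[key % len(replacements)]

# Hypothetical replacement values and source rows.
FAKE_NAMES = ["Smith", "Jones", "Brown", "Taylor"]
rows = [
    {"id": 1, "last_name": "Jansen", "phone": "06-1234567", "city": "Groningen"},
    {"id": 2, "last_name": "de Vries", "phone": "06-7654321", "city": "Assen"},
    {"id": 3, "last_name": "Bakker", "phone": "06-1112223", "city": "Zwolle"},
]

rng = random.Random(42)  # fixed seed so the run is repeatable
cities = shuffle_column([r["city"] for r in rows], rng)
masked = [
    {
        "id": r["id"],
        "last_name": lookup(r["id"], FAKE_NAMES),
        "phone": scramble(r["phone"], rng),
        "city": city,
    }
    for r, city in zip(rows, cities)
]
for row in masked:
    print(row)
```

Note the different trade-offs: shuffling keeps realistic values and distributions but every value is still a real one, scrambling preserves only the format, and a lookup gives the same replacement for the same key every time, which helps keep masked data consistent between runs.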

The template-based solution DATPROF Subset not only speeds up distribution and increases the refresh rate but is also a privacy measure: the less personal data an environment holds, the smaller the risk if it leaks. With just a few percent of the data you can cover all of the test cases while realizing major savings on storage costs.
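The key requirement for a subset is that it stays referentially intact: if you keep 5% of the customers, you should keep exactly the orders that belong to those customers. The sketch below shows that principle on a hypothetical two-table SQLite database. The function `make_subset`, the table names and the 5% figure are our own assumptions and do not describe how DATPROF Subset works internally.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
    CREATE TABLE customer (id INTEGER PRIMARY KEY, name TEXT);
    CREATE TABLE orders (
        id INTEGER PRIMARY KEY,
        customer_id INTEGER REFERENCES customer(id),
        total REAL
    );
    CREATE TABLE customer_subset (id INTEGER PRIMARY KEY, name TEXT);
    CREATE TABLE orders_subset (id INTEGER PRIMARY KEY, customer_id INTEGER, total REAL);
""")
# Hypothetical source data: 100 customers with 5 orders each.
conn.executemany("INSERT INTO customer VALUES (?, ?)",
                 [(i, f"customer-{i}") for i in range(1, 101)])
conn.executemany("INSERT INTO orders VALUES (?, ?, ?)",
                 [(i, (i % 100) + 1, 10.0 * i) for i in range(1, 501)])

def make_subset(conn, percent):
    """Copy a percentage of the parent rows plus only the child rows that reference them."""
    total = conn.execute("SELECT COUNT(*) FROM customer").fetchone()[0]
    keep = max(1, total * percent // 100)
    conn.execute("DELETE FROM orders_subset")
    conn.execute("DELETE FROM customer_subset")
    # Start from the parent table ...
    conn.execute(
        "INSERT INTO customer_subset SELECT * FROM customer ORDER BY id LIMIT ?",
        (keep,))
    # ... then follow the foreign key so no order ends up without its customer.
    conn.execute(
        "INSERT INTO orders_subset "
        "SELECT o.* FROM orders o JOIN customer_subset c ON c.id = o.customer_id")

make_subset(conn, 5)
customers = conn.execute("SELECT COUNT(*) FROM customer_subset").fetchone()[0]
orders = conn.execute("SELECT COUNT(*) FROM orders_subset").fetchone()[0]
orphans = conn.execute(
    "SELECT COUNT(*) FROM orders_subset "
    "WHERE customer_id NOT IN (SELECT id FROM customer_subset)").fetchone()[0]
print(customers, orders, orphans)  # 5 25 0
```

With two tables this is trivial. With hundreds of tables, circular relations and several database technologies, working out the right order and the right joins is exactly the part that becomes hard to build and maintain yourself.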

Our TDM Tools Get The Job Done

DATPROF enables software teams to ship their software faster with better quality

Discover & Learn

Discover your data and gain insight into its quality by profiling and analyzing your application databases.

Mask & Generate

Provide test teams with high-quality masked production data and synthetically generated data for compliance.

Subset & Provision

Subset the right amount of test data and reduce the storage costs and wait times for new test environments.

Integrate & Automate

Provide each team with the right test data using the self-service portal, or automate test data delivery with the built-in API.